Performance marketing teams are caught in a cycle of diminishing returns with generative video. The initial excitement of turning a single sentence into a moving image has given way to the sobering reality of production throughput: most AI-generated video is unusable for high-stakes ad spend because it lacks brand consistency or structural integrity.

The industry suffers from what I call the "First-Frame Fallacy": the mistaken belief that a sophisticated motion model like Nano Banana Pro can compensate for a mediocre or poorly composed source image. In reality, the motion model is an amplifier. If the source asset contains anatomical errors, lighting inconsistencies, or poor compositional balance, the video generation process will not only replicate those errors but magnify them into distracting artifacts.

To scale performance creative, teams must shift their focus upstream. The real work of video generation happens in the AI Image Editor before a single frame is ever animated. By treating the source image as the "DNA" of the video, operators can significantly reduce their iteration cycles and lower the cost per successful creative asset.
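The cost argument can be made concrete with a little arithmetic. The sketch below assumes each generation attempt succeeds independently with a fixed probability, so the expected number of attempts per usable video is one divided by that probability. The function name and all figures are hypothetical and chosen only for illustration; they are not measurements from any tool.

```python
def cost_per_usable_asset(cost_per_generation: float, success_rate: float) -> float:
    """Expected spend per usable video, assuming independent attempts.

    With a fixed success probability p, the expected number of attempts
    until the first success is 1 / p, so expected cost is cost / p.
    """
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the range (0, 1]")
    return cost_per_generation / success_rate


# Hypothetical figures: same price per generation, different success rates.
unrefined = cost_per_usable_asset(cost_per_generation=0.50, success_rate=0.10)
refined = cost_per_usable_asset(cost_per_generation=0.50, success_rate=0.40)

print(f"unrefined source frame: {unrefined:.2f} per usable asset")
print(f"refined source frame:   {refined:.2f} per usable asset")
```

Under these made-up numbers, the per-generation price never changes; only the share of usable outputs does, and that share is what upstream image work is meant to improve.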

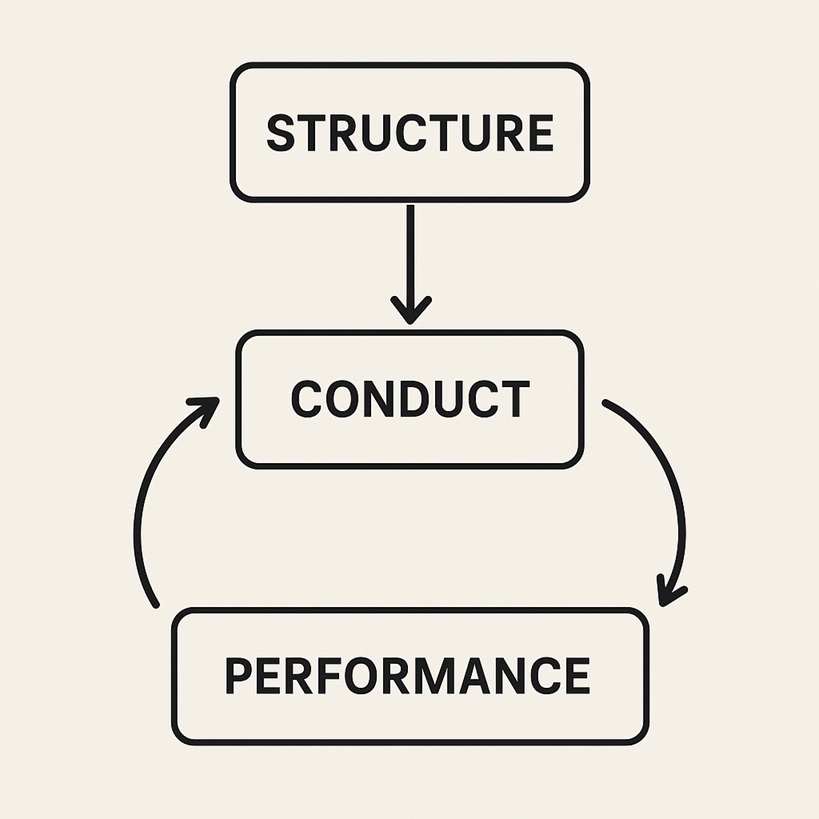

The Structural Dependency of Temporal Consistency

Temporal consistency refers to the ability of an AI model to maintain the identity and shape of objects across time. When you use Nano Banana to animate a product, the model looks at the first frame to understand depth, texture, and boundary definitions.

If that first frame is "muddy"—meaning the edges of the product bleed into the background—the motion algorithm will struggle to differentiate the subject from its environment. This results in "morphing," where the product seems to melt into the scenery as it moves. Within the Banana AI ecosystem, the highest success rates come from source images with high contrast and clearly defined subjects.

From a systems-minded perspective, the motion model is performing a series of sophisticated interpolations. It is essentially guessing where pixels should move based on the data provided in the initial state. If the initial state is 1024x1024 but lacks sharp detail, the model has to fill in the gaps. This is where most performance marketers lose money: they spend GPU credits on "fixing" bad videos when they should have spent five minutes refining the source image in Banana Pro first.
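One way to catch a "muddy" frame before spending credits is a rough sharpness score. The sketch below is an assumption on my part, not how any Banana AI tool measures quality: it treats the image as a grid of grayscale values and averages the squared difference between neighbouring pixels, a simple proxy in which hard edges score higher than soft transitions. The name `edge_energy` and the tiny sample grids are hypothetical.

```python
Gray = list[list[int]]  # rows of 0-255 luminance values


def edge_energy(img: Gray) -> float:
    """Mean squared difference between neighbouring pixels.

    Higher values mean more abrupt transitions, i.e. crisper edges.
    """
    height, width = len(img), len(img[0])
    total, pairs = 0, 0
    for y in range(height):
        for x in range(width):
            if x + 1 < width:  # horizontal neighbour
                total += (img[y][x + 1] - img[y][x]) ** 2
                pairs += 1
            if y + 1 < height:  # vertical neighbour
                total += (img[y + 1][x] - img[y][x]) ** 2
                pairs += 1
    return total / pairs if pairs else 0.0


# Same overall brightness range, but one hard boundary versus a soft ramp.
crisp = [[0, 0, 255, 255] for _ in range(4)]
muddy = [[0, 85, 170, 255] for _ in range(4)]

print(edge_energy(crisp))  # 10837.5
print(edge_energy(muddy))  # 3612.5
```

The absolute numbers mean little on their own. The score is most useful for comparing candidate source frames of the same subject and picking the one with the cleanest boundaries.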

Why the AI Image Editor is the Most Important Video Tool

It seems counterintuitive, but the quality of your video workflow is governed by your image editing capabilities. When preparing an asset for Nano Banana Pro, the "raw" output from a text-to-image prompt is rarely sufficient.

There are three specific areas where the AI Image Editor saves a video production:

➔ Anatomical and Geometric Correction: If a generated character has a six-fingered hand or a product has a warped logo, the video model will try to animate those errors. The result is often grotesque or brand-damaging. Correcting these in the static frame is mandatory.

➔ Lighting Homogeneity: Motion models tend to treat shadows as depth cues. If your source image has conflicting light sources, the motion model may generate "flicker" as it tries to reconcile those shadows during movement.

➔ Edge Cleanliness: For products, the background-to-foreground separation must be distinct. Using tools to sharpen edges or replace busy backgrounds with cleaner environments allows the motion engine to focus on the intended kinetic path.

One significant limitation of current generative workflows—including those using high-end models—is the "drift" that occurs in textures. Even with a perfect source frame, complex patterns like lace or fine wood grain tend to "crawl" or vibrate. While a better source frame minimizes this, it is an inherent technical ceiling that marketers must account for by choosing textures that the AI handles more gracefully, like solid surfaces or soft gradients.

Compositional Logic in Nano Banana Pro

The way you frame your source image dictates the "kinetic potential" of the video. If you place a subject too close to the edge of the frame, Nano Banana Pro will have no "padding" to work with when it tries to pan or zoom.
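The "padding" idea can be checked numerically before animating. The sketch below assumes you already have a bounding box for the subject (from any detector or by hand) and reports which sides leave less than a chosen fraction of the frame as margin. The `Box` type, the function names, and the 10% default are illustrative assumptions, not settings from Nano Banana Pro.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Subject bounding box in pixels; right and bottom are exclusive."""
    left: int
    top: int
    right: int
    bottom: int


def margins(frame_w: int, frame_h: int, subject: Box) -> dict[str, float]:
    """Free space on each side, as a fraction of the frame dimension."""
    return {
        "left": subject.left / frame_w,
        "right": (frame_w - subject.right) / frame_w,
        "top": subject.top / frame_h,
        "bottom": (frame_h - subject.bottom) / frame_h,
    }


def tight_sides(frame_w: int, frame_h: int, subject: Box,
                min_margin: float = 0.10) -> list[str]:
    """Sides where the subject sits closer to the edge than min_margin."""
    return [side for side, m in margins(frame_w, frame_h, subject).items()
            if m < min_margin]


# Hypothetical 1024x1024 frame with the subject hugging the left edge.
subject = Box(left=40, top=200, right=700, bottom=900)
print(tight_sides(1024, 1024, subject))  # ['left']
```

A frame flagged on one side is a poor candidate for a pan or zoom toward that side; recomposing or extending the canvas first is usually cheaper than regenerating video.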

For performance marketers, 9:16 is the standard aspect ratio for vertical placements. However, generating a 9:16 image and then animating it often leads to disappointing results because the vertical space is so constrained. A more effective workflow involves generating a wider composition, refining it in the AI Image Editor, and then using the "Motion Brush" or directional prompts to control the movement within that space.
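The workflow above does not say how a wider composition gets back to 9:16 for delivery. One plausible step, sketched here as an assumption, is to compute a 9:16 window inside the wide frame, centred on the subject as closely as the frame edges allow. The function name and the 1920x1080 example are hypothetical.

```python
def vertical_crop(frame_w: int, frame_h: int, center_x: int,
                  ratio_w: int = 9, ratio_h: int = 16) -> tuple[int, int, int, int]:
    """Return (left, top, right, bottom) of the largest ratio_w:ratio_h window.

    The window is centred horizontally on center_x, then clamped so it
    never extends past the frame edges.
    """
    crop_h = frame_h
    crop_w = crop_h * ratio_w // ratio_h
    if crop_w > frame_w:  # frame is narrower than the target ratio
        crop_w = frame_w
        crop_h = crop_w * ratio_h // ratio_w
    left = min(max(center_x - crop_w // 2, 0), frame_w - crop_w)
    top = (frame_h - crop_h) // 2
    return left, top, left + crop_w, top + crop_h


# Subject centred at x=1500 in a hypothetical 1920x1080 master frame.
print(vertical_crop(1920, 1080, center_x=1500))  # (1197, 0, 1804, 1080)
# Subject near the right edge: the window is clamped to stay in frame.
print(vertical_crop(1920, 1080, center_x=1900))  # (1313, 0, 1920, 1080)
```

The clamped case is worth noticing: when the window has to shift away from the subject, the subject is no longer centred in the vertical cut, which is another signal that the master frame needs more room on that side.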

Nano Banana Pro operates best when there is a clear "lead-in" for motion. If you want a camera to move forward, the composition should have a clear vanishing point. If you want a subject to move across the frame, there should be "negative space" for them to move into. Ignoring these basic rules of cinematography results in videos where the motion feels cramped, jittery, or physically impossible.

The Limitations of the 'Fix It in Post' Mentality

In traditional film, "fix it in post" refers to using VFX to correct mistakes made on set. In the AI era, some creators try to "fix it in the prompt." They take a low-quality image and write a 200-word prompt for the video generator, hoping the AI will add the missing detail.

This rarely works. The model has to balance the visual cues of the image against the linguistic instructions of the prompt. When these two sources of truth conflict (for example, a prompt asking for "high detail" on a blurry image), the model creates "hallucinations." These appear as digital noise, strange flickering artifacts, or sudden changes in the character’s appearance mid-video.

There is also notable uncertainty in how different models handle "atmospheric" prompts. Adding "smoke" or "particles" to a video prompt can sometimes obscure the product entirely because the AI prioritizes the movement of the smoke over the stability of the subject. A more disciplined approach is to keep the prompt restrained and make sure the source frame is as clean and high-resolution as possible before clicking 'Generate.'

Strategic Implementation for Performance Teams

For a creative team looking to output 50 variations of an ad per week, the workflow must be modular.

➔ Template Generation: Create a high-quality "Master Frame" using Banana AI tools. This frame should be brand-compliant and high-resolution.

➔ Asset Modification: Use the AI Image Editor to swap out products, change background colors, or adjust the ethnicity of a model while keeping the same basic composition.

➔ Controlled Animation: Run these variations through Nano Banana Pro using a consistent set of motion parameters (e.g., Motion Bucket 5, Zoom 1.2).

This systems-based approach ensures that the "look and feel" of the brand stays consistent even as the specific creative elements change. It moves the operator from a position of "hoping for a good result" to "directing a predictable output."
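The modular workflow can be expressed as a small job manifest: one master frame, a set of swappable elements, and a single motion preset shared by every variation. The sketch below is a planning aid only. The class names, field names, and file name are hypothetical, and nothing here calls a real Nano Banana Pro API; the preset values simply mirror the example parameters mentioned above.

```python
import itertools
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class MotionPreset:
    """Shared motion settings (hypothetical field names)."""
    motion_bucket: int = 5
    zoom: float = 1.2


@dataclass(frozen=True)
class Job:
    master_frame: str
    product_name: str
    background: str
    motion: MotionPreset


def build_jobs(master_frame: str, products: list[str], backgrounds: list[str],
               motion: MotionPreset = MotionPreset()) -> list[Job]:
    """One job per product/background pair, all sharing the same preset."""
    return [Job(master_frame, p, b, motion)
            for p, b in itertools.product(products, backgrounds)]


# Hypothetical inputs: 5 products x 10 backgrounds = 50 variations.
products = [f"product-{i}" for i in range(1, 6)]
backgrounds = [f"background-{i}" for i in range(1, 11)]
jobs = build_jobs("master_frame_v1.png", products, backgrounds)

print(len(jobs))  # 50
print(json.dumps(asdict(jobs[0]), indent=2))
```

Keeping the preset in one frozen object means a change to the motion settings applies to the whole batch at once, which is what keeps the variations comparable when they are tested against each other.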

Managing Expectations: Where Source Control Still Fails

It is important to be realistic: source control is not a silver bullet. Even with a perfect 4K source frame, certain types of motion remain incredibly difficult for current AI architectures.

For instance, "occlusion"—where one object passes behind another—is a common failure point. If your source image features a complex foreground, the AI may struggle to "re-render" the background once the foreground object moves. This results in a "smearing" effect where the background pixels seem to follow the foreground object.

Similarly, rapid human movement remains a challenge. A high-quality static image of a runner does not guarantee a realistic running animation; the AI often struggles with the physics of limb placement and weight distribution. In these cases, the limitation isn't the image; it is how current generative models handle physics over time. Knowing these boundaries allows a production team to avoid "impossible" shots and stick to motion that the AI can execute with high fidelity, such as slow pans, gentle movements, and environmental shifts.

The Commercial Path Forward

The goal of using Nano Banana Pro in a commercial context is not to create a feature film; it is to create a pattern interrupt that stops the scroll. To achieve this at scale, the focus must remain on the integrity of the input.

By mastering the AI Image Editor and understanding the relationship between composition and kinetic potential, teams can finally move past the "lottery" phase of AI video. They can build repeatable pipelines where the source asset is treated as a rigorous technical specification rather than a rough suggestion.

In the end, the "First-Frame Fallacy" costs time and money. By investing in the quality of the static frame, creators aren't just making better images—they are building the foundation for stable, high-performance video creative that actually converts. The tools within the Banana Pro suite are designed for this exact synergy, allowing for a seamless transition from a refined static concept to a professional-grade motion asset. Moving forward, the most successful AI creators won't be those who can write the longest prompts, but those who can most effectively control the source pixels.